Explainable and Trustworthy AI/ML for 6G and Beyond (Next-G)

Institutions

- TU Berlin

- Fraunhofer HHI, Artificial Intelligence Department

- Huawei

Team @ TKN

- Osman Tugay Başaran (PI)

- Prof. Dr. Falko Dressler

- Prof. Dr. Wojciech Samek (Head of AI and XAI Departments)

- Dr. Götz-Philip Brasche (Huawei, CTO Cloud Europe)

- Dr. Xun Xiao (Huawei, Principal Researcher @Advanced Wireless Technology Lab)

- Dr. Xueli An (Huawei, Head of 6G Network Architecture Research Group)

- Armin Ebrahimi Saba (Student Research Assistant)

- Tim Leon Metz (Student Research Assistant)

- Hamza Majid (Student Research Assistant)

Funding

- BMFTR

Project Time

- 01/2026 - 12/2027

Homepage

Description

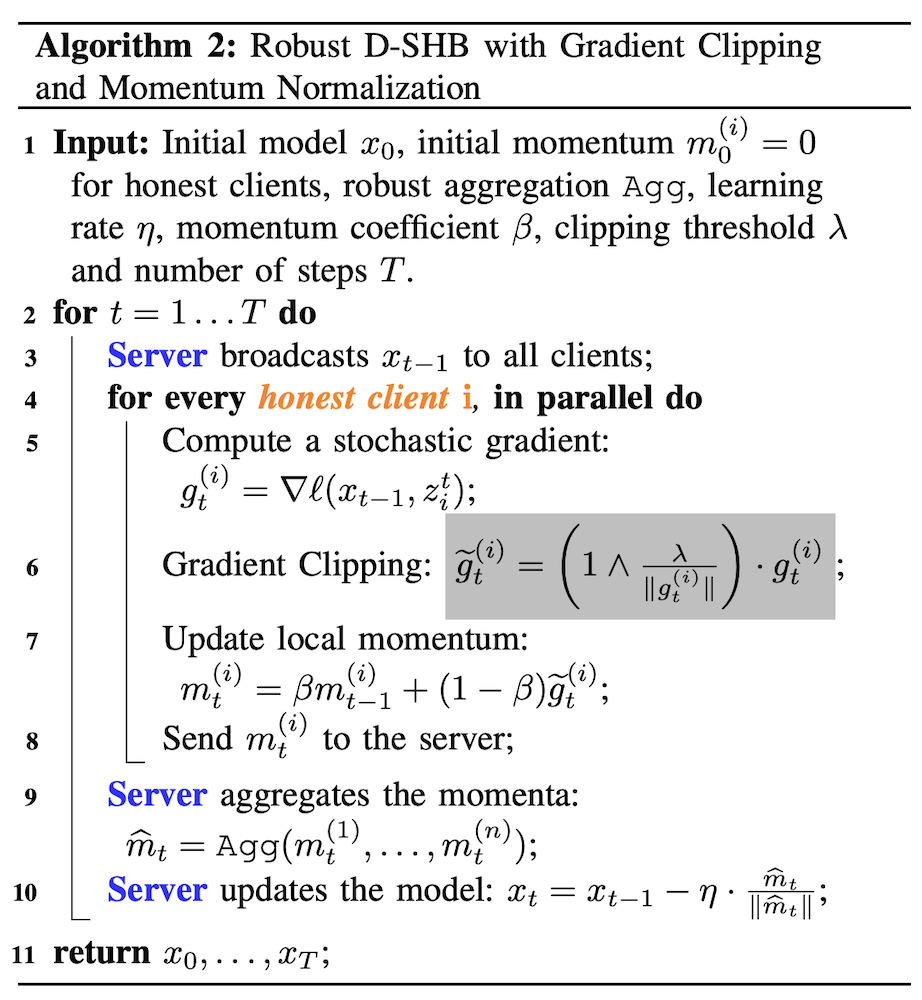

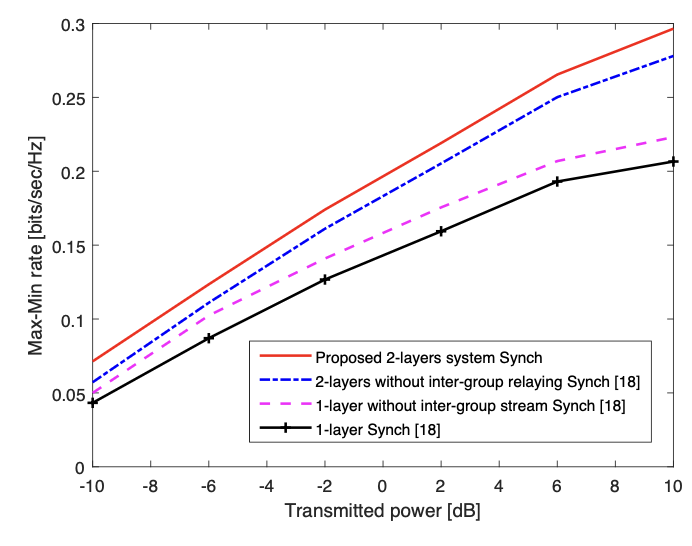

Future 6G systems will not only connect people and devices, but also enable connected robotics and mission-critical communications in environments such as smart hospitals and smart factories. In these settings, AI will increasingly support functions such as perception-action, control-sensing, resource orchestration, and real-time decision-making across communication and compute infrastructures. This creates enormous opportunities, but also a fundamental challenge: these AI-driven systems must be not only high-performing, but also explainable, trustworthy, robust, and safe. This is where “NEXT-G” comes in. The project develops methods and frameworks for explainable and trustworthy AI, with particular attention to AI-native radio access networks, low-latency decision intelligence, adversarial robustness, and human-understandable explanations for critical network actions.